Doctors Got 2% of the Views

1,522 hantavirus videos were watched over a billion times in 18 days. Doctors accounted for 2% of the views. The algorithm decided the rest.

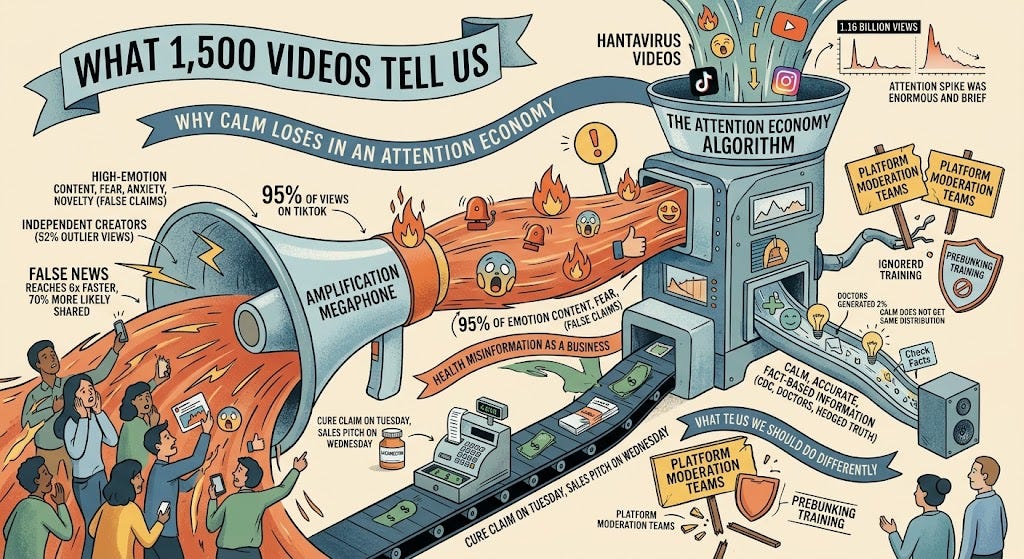

What 1,500 videos tell us

In the 18 days between April 24th and May 12th, an analysis identified more than 1,500 hantavirus videos across TikTok, Instagram, and YouTube. Together they were watched 1.16 billion times, and TikTok alone accounted for 95% of those views. The attention spike was enormous and brief: 128 million views on May 4th, the day the first deaths were reported, then a steep decline to 4.8 million views by May 12th. Most of the audience had moved on before the quarantine in Nebraska even started.

Independent creators, not news organizations, generated 52% of the outlier views, or more than 258 million. Doctors generated 2%. Videos tagged with fear and anxiety themes pulled in 69 million views. The 88 videos that explicitly compared hantavirus to COVID drew 27 million. The content that performed best was emotional, not informational.

Why calm loses in an attention economy

TikTok doesn’t distribute content based on who you follow. It distributes based on what keeps you watching. A 653-follower account posted a hantavirus video and got 487,000 views. A 14,200-follower account got 3.2 million. The algorithm doesn’t know or care whether the person talking is an infectious disease specialist or someone who made their first video that morning. It rewards content that triggers emotion. Calm, accurate content does not get the same distribution.

Dr. Rubin, an allergist with 2.2 million TikTok followers, posted a reassuring “Do I need to panic?” video that got 5.7 million views — one of the top-performing doctor videos in the dataset. But that was one video against a flood of hundreds.

A study in Science quantified the structural problem: false news reaches people about six times faster than true information and is 70% more likely to be shared. The driver is novelty. False claims feel new and emotionally charged. True information tends to be hedged and careful. In an attention economy, hedged loses.

This is the environment in which about half of Americans under 50 now get health information from influencers and podcasts. The first voice many people heard about hantavirus was not the CDC. It was whoever the algorithm served them.

Cure claim on Tuesday, sales pitch on Wednesday

On May 7th, a Houston ENT doctor named Mary Talley Bowden posted on X that ivermectin “should work” against hantavirus. The post reached 3.5 million views. Former Rep. Marjorie Taylor Greene amplified it, recommending ivermectin, vitamin D, and zinc to her followers. The next day, Bowden posted that she’d be selling ivermectin to Texans for up to $110, no prescription needed.

The claim is wrong on basic biology — hantavirus replicates in the cytoplasm, not the nucleus, so ivermectin’s proposed mechanism doesn’t apply. Four physicians told PolitiFact they knew of zero evidence for ivermectin against any hantavirus. But Bowden didn’t need to be right. She needed to be first.

This is health misinformation as a business. The Carter Center estimated that advertisers spend roughly $2.6 billion a year on misinformation websites. NPR found that during COVID, 147 key anti-vaccine accounts grew their followings by at least 25% and now monetize through subscriptions, supplements, and speaking fees. Bowden was reprimanded by the Texas Medical Board in 2025 for prescribing ivermectin at a hospital where she didn’t have privileges. She built her audience during COVID. Every new health scare is a new product launch. Cure claim on Tuesday, sales pitch on Wednesday.

What the data tells us we should do differently

The usual answer is fact-checking, but the evidence suggests it has to happen ahead of time. A 2024 review of outbreak communication found that reactive fact-checking arrives too late in most cases: the false claim has already shaped beliefs by the time the correction is published. Three randomized trials published in 2025 found that prebunking, which teaches people to recognize manipulation techniques before they encounter specific claims, reduced susceptibility by 20-30%.

But even if prebunking works at the individual level, the platform architecture still funnels attention toward content that triggers emotion rather than content that informs. And the platforms that once created friction against false claims dismantled their health misinformation teams between 2022 and 2024.

The hantavirus outbreak involves fewer than 20 confirmed cases worldwide. In 18 days, more than 1,500 videos about it were watched over a billion times. The problem is not that bad actors post false claims. It’s that the platforms amplify them. The moderation teams that once pushed back were dismantled. The public health agencies that should be posting were never on TikTok to begin with.